Mobile App Security: Best Practices on Android & iOS

Mobile apps often handle private and sensitive user data such as personal health or banking information. Losing data or getting hacked can therefore have huge consequences. There is no bigger nightmare for an app developer than learning that their application was involved in a major data leak and that user data was stolen.

We at QuickBird Studios work on many apps in the health and medical sector which handle very sensitive data that shouldn’t end up in the wrong hands. We see it as one of our most important duties to secure our users’ data.

In this article, we have collected best practices for keeping your users’ data safe. We are going to talk about how to implement mobile app security for storing data, for communicating between client and server, and for other aspects like logging and analytics.

If you want even more specific advice, read our articles on iOS app security and Android app security.

The basics of Mobile App Security

Collecting a lot of data puts you in a position of great responsibility. If any data gets lost or leaked, it’s going to reflect on you. That’s why your mobile app should only collect the data it needs to work properly.

The most secure data is the data you don’t collect.

Besides being cautious about collecting data, it is also very important to be fully transparent about which data you collect and what you are going to use it for. Be clear about which data is stored where and how users can access it. In addition to informing your users, you should give them the ability to control which data is collected. Also, provide a way for them to delete their personal information from your service.

Under the General Data Protection Regulation (GDPR), enacted by the European Union, software manufacturers that process personal data of people in the EU are also legally obliged to protect users’ data and to give them transparency and control over the data collected about them.

Security for storing user data

Storing data in your mobile apps is one of the most common tasks you will encounter as a mobile developer. Still, a lot of people don’t do it right and don’t follow best practices. iOS and Android actively help you with this task by running your app in a sandbox and enabling basic data encryption by default. But this is not enough on its own and should be strengthened when working with sensitive user data.

In general, we advise using system features as much as possible. A good rule of thumb is to always use the highest-level API that meets your needs. Cryptography in particular is difficult, and the cost of bugs is typically so high that it’s rarely a good idea to implement your own cryptography solution.

App Sandbox

All apps on iOS and Android run in a secure environment called a “sandbox”. The application sandbox is a set of fine-grained controls that limits the app’s access to the file system, hardware, user preferences, etc. Even though the sandbox systems of iOS and Android are different, they share a lot of common ideas. All of the app’s data, including preferences and files, is stored in a unique sandbox directory per app. Generally, only your app has direct access to this folder. No other app can access it.

Both operating systems have ways to overcome the limitations of the app’s sandbox. For example, an app can request permission to access the user’s photo library. You should be careful with these features though. Attackers who manage to hijack your app can use its privileges to access these files. Don’t request more privileges/permissions than you actually need.

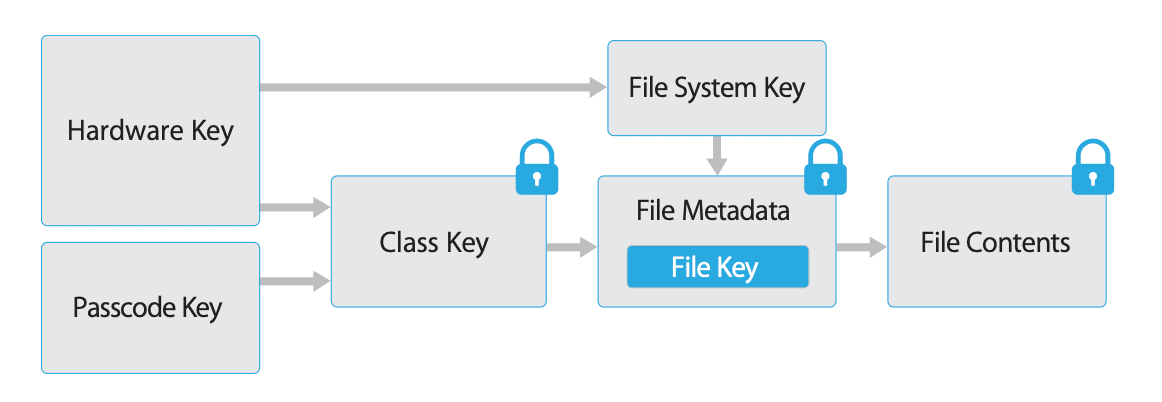

File encryption

Newer versions of iOS and Android have file encryption built in and enabled by default. Even if someone has physical access to your phone, they are not able to read the data without decrypting it using some kind of key, e.g. the passcode of your smartphone.

On both operating systems, you can configure this file encryption by defining file-level configurations. They define how files are protected and when they get decrypted. The encryption and decryption processes are automatic and mostly hardware-accelerated, so they have only a minimal performance impact.

File Encryption in lower OS versions

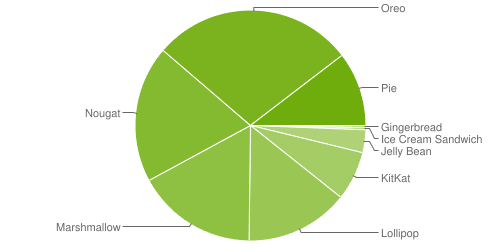

Android device fragmentation in a 7-day period ending on May 7, 2019

If you plan to support older OS versions (especially older Android versions), the operating system doesn’t offer as many security mechanisms as newer OS versions do. That’s why you will need to implement additional security mechanisms for these users.

According to Google, over 40% of active Android users run OS versions lower than 7.0, which don’t support system-wide file-based encryption. All devices running Android 4.4 or higher (96.2%) support at least full-disk encryption.

To give users of lower OS versions the same level of security, you should therefore encrypt your application’s data on your own. We will introduce you to concrete implementation techniques for this in our upcoming iOS and Android in-depth security articles.

Encrypted key-value storage

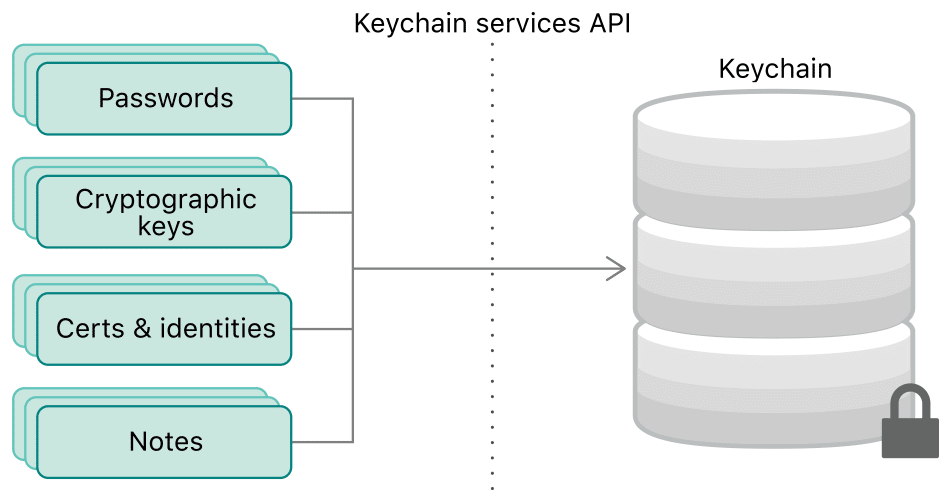

Sometimes you need a secure place to store small chunks of data like passwords or certificates. Both iOS and Android provide secure storage for this purpose, the Keychain on iOS and the Keystore on Android, which encrypts all of its content on the device and prevents other apps from extracting it. You don’t need to store any encryption keys in your app and can rely on the system to provide the highest security.

These secure stores are your go-to solution to store small chunks of data. They are the secure key-value storage replacement for things like SharedPreferences on Android or NSUserDefaults on iOS. These unencrypted key-value stores should be avoided for this kind of data.

Secure Data Transportation

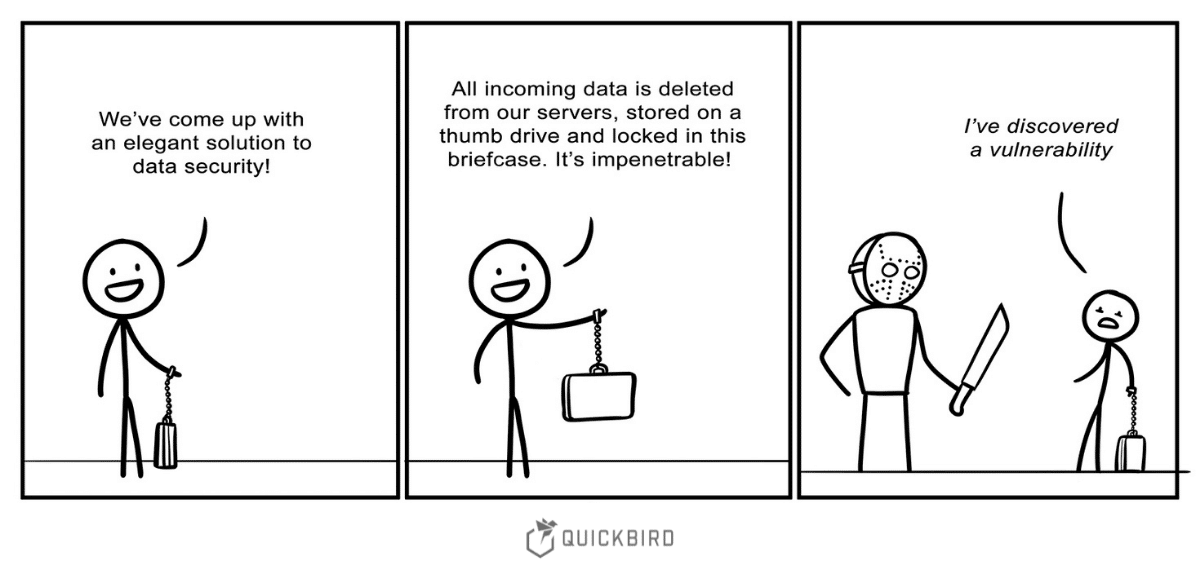

In general, it’s a good idea to keep as much data as possible on the device if you don’t need it on your servers. Compromising a user’s device gives an attacker access only to that specific user’s information, which makes a single device a less attractive target. If an attacker gains access to the app’s server, however, they can get access to all users’ data. That’s why you should think twice before putting sensitive data into such a high-value target. You can never be sure that your server or system does not have an undiscovered security flaw.

Besides making sure the server doesn’t get hacked, it is also really important to make the data transportation as secure as possible:

HTTPS over SSL / TLS

This should be the standard for any communication between your app and your server. HTTPS encrypts all messages sent between client and server and protects them against simple man-in-the-middle attacks.

HTTPS is relatively easy to add to your server and, with services like Let’s Encrypt, also free of charge. On the client side, there is nothing you need to do as a developer, because the TLS/SSL protocol is handled by the operating system.

In fact, iOS and Android expect you to use HTTPS by default. This security mechanism can be disabled completely, or you can define exception domains if there is no way around that. In any case, if you control the server, you should make sure to use the newest TLS version so that you benefit from current state-of-the-art encryption.
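To illustrate what enforcing a modern TLS version can look like, here is a minimal sketch using Python’s standard `ssl` module. Python is used only for brevity, and the function name `make_client_context` is our own invention. On iOS and Android the platform networking stack normally takes care of this for you.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Return a client TLS context that refuses anything older than TLS 1.2."""
    # create_default_context() turns on certificate and hostname verification.
    context = ssl.create_default_context()
    # Reject handshakes that would fall back to SSLv3, TLS 1.0 or TLS 1.1.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_context()
```

The same `minimum_version` attribute can be set on a server-side context; which minimum you choose depends on the clients you still need to support.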

HTTP Strict Transport Security

The counterpart to this HTTPS enforcement on the client is HTTP Strict Transport Security (HSTS) on your server. It is a web security policy, delivered as a response header, that tells clients to reach the server only via secure connections and helps protect against cookie hijacking and protocol downgrade attacks. Use it so that an attacker cannot downgrade a connection from HTTPS to unencrypted HTTP.
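On the wire, the policy is a single response header named `Strict-Transport-Security`. The following sketch builds that header; the helper name `hsts_header` and the one-year default are assumptions chosen for illustration, not a recommendation for every service.

```python
from typing import Tuple


def hsts_header(max_age_seconds: int = 31536000,
                include_subdomains: bool = True) -> Tuple[str, str]:
    """Build the Strict-Transport-Security response header as (name, value)."""
    if max_age_seconds < 0:
        raise ValueError("max_age_seconds must not be negative")
    # max-age tells the client how long to insist on HTTPS for this host.
    value = f"max-age={max_age_seconds}"
    if include_subdomains:
        # Extend the policy to every subdomain of the host.
        value += "; includeSubDomains"
    return ("Strict-Transport-Security", value)


name, value = hsts_header()
```

In practice you would usually set this header in your web server or framework configuration rather than by hand.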

SSL Pinning

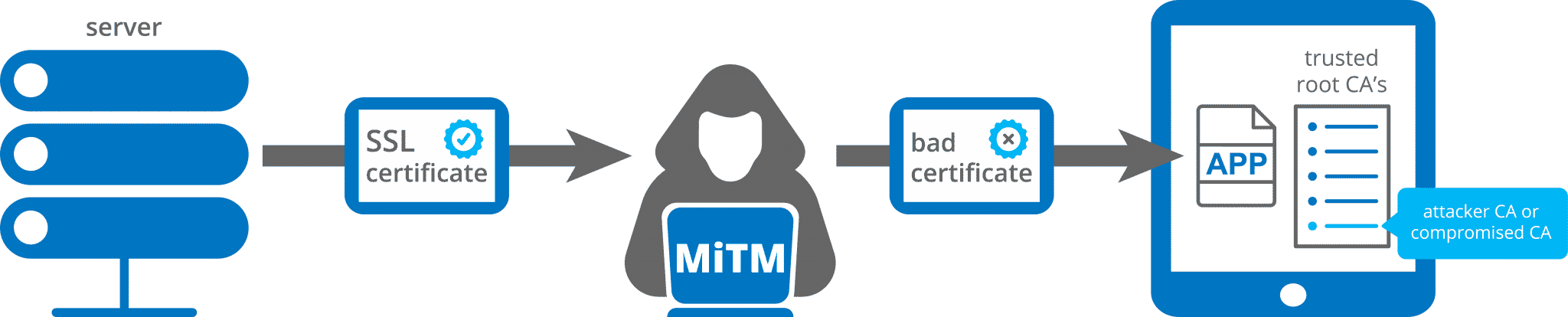

HTTPS encrypts all of your messages and makes communication safe, but it doesn’t protect you from more complex man-in-the-middle attacks.

By default, the operating system has its own certificate trust validation that checks if the certificate was signed by a root certificate of a trusted certificate authority. If someone has full control over the device, however, they can explicitly trust malicious certificates. Or, in the worst case, someone could have compromised a certificate authority.

Additionally, attackers can run the app on their own device and explicitly trust their own certificates. This doesn’t particularly help them steal data from other users, but it allows them to see the messages being sent between server and client. This means a malicious actor can reverse-engineer the API.

To prevent these attacks, you can pin your SSL certificates. SSL Pinning can be added to your mobile app by including a list of valid certificates (or their public keys or hashes) in your app bundle. The app can then check if the certificate used by the server is on this list. This makes sure that not only the OS but also your app trusts this certificate.

In that way, you avoid more complex man-in-the-middle attacks but also introduce some new risks: since the app needs to hardcode the trusted certificates, the app needs to be updated if a server certificate expires. Otherwise, your app won’t be able to connect to your server and there is no way to remotely solve this problem (without introducing other security risks). To avoid such a situation, it is advisable to pin the future certificates in the client app before release.
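The core of the pinning check is a comparison between a hash of the certificate the server presents and a list shipped with the app. Here is a minimal sketch of that comparison. The function names are hypothetical and the byte strings are placeholders standing in for real DER-encoded certificates.

```python
import hashlib
import hmac


def fingerprint(cert_der: bytes) -> str:
    """Return the SHA-256 fingerprint (hex) of a DER-encoded certificate."""
    return hashlib.sha256(cert_der).hexdigest()


def is_pinned(cert_der: bytes, pinned_fingerprints: set) -> bool:
    """Check whether the presented certificate matches one of the pins."""
    actual = fingerprint(cert_der)
    # compare_digest performs a constant-time string comparison.
    return any(hmac.compare_digest(actual, pin) for pin in pinned_fingerprints)


# Placeholder bytes; a real app would bundle fingerprints of real certificates.
current_cert = b"current-server-certificate"
future_cert = b"next-server-certificate"
# Pinning the upcoming certificate as well keeps the app working after rotation.
PINNED = {fingerprint(current_cert), fingerprint(future_cert)}
```

In a Python client, the presented certificate could be read with `SSLSocket.getpeercert(binary_form=True)`; on iOS and Android you would hook into the platform’s own trust evaluation instead.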

Prevent unauthorized access to your API

You might also want to make sure that only your mobile app can use your server’s API and block all other requests.

Many apps use static API tokens/secrets to achieve that. This is not safe at all, though, and you should try to avoid them in general. Attackers can easily reverse-engineer that mechanism and reproduce it: for example, they can pretend to be your app by sending exactly these API tokens/secrets from their own program.

To prevent these attacks, you need to have control over both the app and its server. The client then needs to authenticate itself by sending some sort of generated token or by signing the request. The server then validates the request and replies only to the real app.
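One way to picture request signing is an HMAC over the request plus a timestamp. The sketch below makes several assumptions that are not part of the text above: the key is issued per installation (for example after login) and kept in the Keychain/Keystore rather than shipped in the binary, the five-minute replay window is arbitrary, and all function names are hypothetical.

```python
import hashlib
import hmac
import time

MAX_AGE_SECONDS = 300  # assumed replay window, adjust to your needs


def sign_request(key: bytes, method: str, path: str,
                 body: bytes, timestamp: int) -> str:
    """Client side: sign method, path, timestamp and body with HMAC-SHA256."""
    message = b"\n".join(
        [method.encode(), path.encode(), str(timestamp).encode(), body]
    )
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_request(key: bytes, method: str, path: str, body: bytes,
                   timestamp: int, signature: str, now=None) -> bool:
    """Server side: reject stale requests, then recompute and compare."""
    now = int(time.time()) if now is None else now
    if abs(now - timestamp) > MAX_AGE_SECONDS:
        return False
    expected = sign_request(key, method, path, body, timestamp)
    return hmac.compare_digest(expected, signature)
```

A signature like this only proves possession of the key. It does not prove that the request comes from an unmodified copy of your app.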

There are also more advanced checks for this, and both Apple and Google provide attestation services for exactly this problem, such as Apple’s DeviceCheck and App Attest and Google’s Play Integrity API.

End-to-end encryption

Ok, now we can securely transfer data between our client and our servers. Our clients are protected by the operating system and its encryption mechanisms.

Sounds like we are done, no? Not really! What happens if your servers get hacked? If we store all the sensitive data unencrypted on our servers, this would be a disaster. And even if the data is encrypted, attackers might be able to get access to the encryption keys and decrypt all the data.

The best way to protect against these kinds of attacks is by using end-to-end encryption. It makes sure that only communicating client devices can decrypt the messages. This could mean in the case of a messaging app that only the receiver can decrypt the sender’s message and in the case of a health database app that only devices owned by the same person can decrypt the data. No other parties can decrypt the data, not even the service provider.

Because you cannot decrypt the data yourself, you are also protected against attacks that target your encryption keys. You don’t have them; only the user’s devices can access these decryption keys. Even if individual users or the service provider get hacked, the attacker does not gain access to any other person’s data.

End-to-end encryption is not easy to implement. We advise everyone who plans to implement it in their apps to have an independent party validate the implementation.

Remote Notifications

Remote notifications are notifications sent by a server application to the users’ devices. These notifications often already contain a lot of information, and you need to send them to Apple’s APNs or Google’s Firebase servers, which then distribute the messages for you.

This means the OS vendor would theoretically be able to intercept and read all these messages. Besides that, it would also mean that our servers need to hold all of this information. That would go against our idea of storing as much data as possible locally on the devices instead of on a remote server.

To solve both of these problems we can use silent remote notifications which don’t send actual messages to the users’ device. They will simply be used as a wakeup call for the app. The app can then fetch the necessary information from the local device and show the actual message to the user.
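To make the idea concrete, here is a sketch of what a server might send for such a wakeup call on APNs. The `content-available` key is how APNs marks a background notification; the `message_id` key and the helper name are hypothetical and only illustrate that the payload carries an opaque reference instead of the message itself.

```python
import json


def build_silent_payload(message_id: str) -> str:
    """Build an APNs-style background notification without visible content."""
    payload = {
        # "content-available": 1 marks a background (silent) notification.
        # There is deliberately no "alert", "badge" or "sound" entry.
        "aps": {"content-available": 1},
        # Hypothetical custom key: an opaque identifier, not the message text.
        "message_id": message_id,
    }
    return json.dumps(payload, separators=(",", ":"))


payload_json = build_silent_payload("a1b2c3")
```

The app receives this payload in the background, resolves the identifier on its own and only then builds the notification the user sees.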

A similar technique is also used by apps like WhatsApp to enable end-to-end encryption. Instead of sending a clear-text message, the server sends the encrypted message to the client. The client then decrypts the message and shows it to the user. This prevents Google, Apple and WhatsApp itself from seeing the actual message.

Best practices for securing your code

Static Code Analysis and Static Application Security Testing

You might have heard of static code analysis before. It is often used to find a subset of bugs like memory leaks in your code, but there is another important use-case for it: searching for common security vulnerabilities.

There are many (sometimes paid) static code analyzers that can evaluate your code and point to common security vulnerabilities. The usage of these analyzers is often referred to as Static Application Security Testing or White-Box Testing.

These tools look at the code before it’s been compiled without executing anything. They can easily be integrated into the software development life-cycle. By detecting security flaws during the first stages of development, issues can be resolved quickly without complex update cycles.

Code obfuscation

Code obfuscation is a technique of transforming your source code into something that is difficult for humans to read. This is done mostly by automated tools before building the application.

This doesn’t make your code itself more secure. The only goal is to complicate the process of reverse-engineering your source code from a compiled application. An attacker who knows how your code works can target its weaknesses much more easily.

Other common security risks

All of the best practices mentioned so far are for nothing, though, if you don’t also watch out for the following things.

Third-party frameworks

3rd party SDKs or frameworks can be a huge security risk for your applications. Since they get compiled with your app and run in the same sandbox they have the same rights as your app. This means a malicious SDK can fetch your users’ location if you asked for this permission or even read from your application data storage or keychain. DON’T simply trust every library that you find on the internet.

Analytics

Less is more, especially in combination with third-party analytics SDKs. Even if you implement your own analytics solution, you should be careful about which information you collect. Analytics data alone can often be enough to identify users or to access their data. For example, an analytics framework that records your screen for crash reports can read your users’ login credentials.
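One simple way to enforce “less is more” in your own analytics code is an allow-list: only properties you have explicitly approved ever leave the device. The property names and the `sanitize_event` helper below are hypothetical.

```python
# Hypothetical allow-list of properties that are safe to report.
ALLOWED_PROPERTIES = {"screen", "app_version", "os_version"}


def sanitize_event(name: str, properties: dict) -> dict:
    """Drop every property that is not explicitly allow-listed."""
    safe = {k: v for k, v in properties.items() if k in ALLOWED_PROPERTIES}
    return {"name": name, "properties": safe}


event = sanitize_event(
    "login_failed",
    {
        "screen": "login",
        "app_version": "2.1.0",
        "email": "jane@example.com",  # silently dropped
        "password": "hunter2",        # silently dropped
    },
)
```

An allow-list fails safe: a newly added field is not reported until someone consciously approves it.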

Logging

As developers, we log to the console for debugging purposes while developing software. Most developers ignore the fact that logs are (at least on iOS) public by default: everyone who attaches the phone to a computer can read the console logs. You should avoid logging sensitive information and use system features such as os_log with placeholders to hide private information in debug messages.
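The following sketch shows the general idea of masking sensitive values before they reach the log output. It is not how os_log works internally; it is a pattern-based filter for Python’s standard `logging` module, and the list of sensitive keys is an assumption you would adapt to your app.

```python
import logging
import re

SENSITIVE_KEYS = ("password", "token", "secret")  # assumed key names
_PATTERN = re.compile(
    r"\b(" + "|".join(SENSITIVE_KEYS) + r")=(\S+)", re.IGNORECASE
)


def redact(message: str) -> str:
    """Replace the value of every sensitive key=value pair with <private>."""
    return _PATTERN.sub(lambda m: f"{m.group(1)}=<private>", message)


class RedactingFilter(logging.Filter):
    """Logging filter that redacts the fully formatted message."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Format first, so values passed as arguments are redacted too.
        record.msg = redact(record.getMessage())
        record.args = ()
        return True


logger = logging.getLogger("app")
logger.addFilter(RedactingFilter())
```

Pattern-based redaction is only a safety net. It cannot recognize sensitive values that do not follow the expected `key=value` shape, so the safest option remains not logging them in the first place.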

Please keep in mind that you cannot always be certain whether you have logged your users’ private information. Imagine you log the user’s clipboard content to the console. Your user then opens a password manager to copy and paste their password. That would mean attackers can read your user’s clear-text password in the console.

Conclusion

Creating safe mobile apps is tough, but there are ways to make your apps more resilient against attackers. Protecting user data must be a high priority and should never be ignored.

Implementing the best practices mentioned above and avoiding common security risks allows you to create safer applications.

In general, we recommend going through this basic checklist and making sure you can tick off all of these points for your app:

- Only collect data you actually need

- Use higher-level APIs provided by the OS if possible

- Secure your storage on less secure OS-versions manually

- Store very sensitive information like credentials and certificates in the Keychain / Keystore

- Don’t build your own encryption mechanisms

- Use HTTPS for any kind of communication between client and server

- Avoid 3rd party SDKs if you cannot trust them 100%

- Consult an independent party to validate your security concept

Even if you implement all these best practices in your app this doesn’t mean that your app is fully bullet-proof. One simple mistake can break your whole security concept.

If you want even more in-depth technical details, check out our two follow-up articles about best practices for iOS security and Android security.